Quarterly Business Reviews (QBRs) were invented with good intentions: get out of the weeds, meet with your customer, and align on outcomes every quarter.

In practice? Many QBRs have become 40-slide product monologues that take weeks to build, bore executives, and don’t change much of anything.

As Aaron Thompson argues in his widely shared post “QBRs are Stupid” [1], the traditional way we do QBRs is often more about checking a box than driving real business value. But when done right—and when modern tools are involved—a QBR (or more broadly, an “Executive Business Review”) can still be one of the highest leverage motions in Customer Success, Sales, and Account Management.

This post breaks down:

- What a QBR is (and what it’s supposed to be)

- Who uses QBRs and why they matter

- The traditional steps to creating a QBR

- How QBRs are evolving (less “quarterly,” more “business review”)

- How Sturdy.ai can run QBRs for any account in seconds—not hours or days

What Is a QBR?

A Quarterly Business Review (QBR) is a structured, typically executive-level meeting between a vendor and a customer to:

- Review business outcomes and value delivered

- Align on goals, strategy, and risks

- Agree on a plan for the next period (not always a quarter anymore)

Unlike a status meeting, a QBR is supposed to focus on outcomes, strategy, and impact, not tickets, small features, or sprint updates.

Industry bodies like TSIA (Technology & Services Industry Association) and customer success leaders (e.g., Gainsight, Winning by Design) have consistently emphasized that effective business reviews should be outcome-based, data-backed, and jointly owned by vendor and customer [2][3].

Who Are QBRs For?

QBRs are heavily used across:

- Customer Success (CS) / Account Management (AM)

  - To prove ongoing value

  - Reduce churn and expand accounts

  - Align on adoption, usage, and business outcomes

- Sales / Strategic Accounts / Customer Directors

  - To maintain executive relationships

  - Surface expansion opportunities

  - Show roadmap alignment to strategic initiatives

- Professional Services / Consulting / Agencies

  - To connect deliverables to business impact

  - Discuss ROI, timeline, and next phases

  - Reset expectations where needed

- Product & Executive Teams

  - To hear voice-of-customer at the highest level

  - Validate product direction with strategic accounts

  - Identify common themes and risks across the portfolio

In modern SaaS and B2B, QBRs have shifted from a “CS-only” ritual to a cross-functional motion that spans CS, Sales, Product, and Leadership [4].

Why QBRs Matter (When They’re Done Right)

When they’re not just slide decks for their own sake, QBRs can:

- Prove value

Tie your product directly to metrics your customer’s executives care about: revenue, cost savings, risk reduction, NPS, time-to-value.

- Protect and grow revenue

Well-run business reviews correlate with higher renewal and expansion rates because they build trust and keep your solution aligned with evolving needs [2][5].

- Align on strategy and roadmap

They create formal space to talk about: “Where is your business going?” and “How does our roadmap support that?”

- Surface risk early

Adoption gaps, champion turnover, budget changes—QBRs are where these get raised and addressed proactively.

The problem is not the idea of a QBR; it’s the way traditional QBRs are executed.

The Traditional QBR: Steps, and Where They Go Wrong

Let’s walk through the typical (old-school) QBR workflow and why it’s so painful.

Step 1: Define Objectives and Audience

What’s supposed to happen:

- Clarify the purpose of the review:

  - Renewal risk?

  - Proving ROI?

  - Expansion discussion?

  - Strategic alignment with a new initiative?

- Confirm who will attend: executive sponsors, day-to-day users, procurement, etc.

- Tailor the content to those people, not a generic template.

Why it matters:

McKinsey and Gartner both emphasize executive conversations that center on the customer’s business priorities, not your internal agenda [5][6]. If you don’t decide the objective and audience upfront, you end up with a “kitchen sink” deck that satisfies no one.

Where it goes wrong:

Teams often skip this step and reuse the same template for every account, regardless of size, segment, or lifecycle stage.

Step 2: Gather Data (Usage, Outcomes, Support, Voice-of-Customer)

What’s supposed to happen:

- Pull product usage data (logins, key feature adoption, utilization vs. license)

- Capture business outcomes (KPIs, ROI estimates, improved cycle times, etc.)

- Summarize support data (tickets, escalations, time-to-resolution)

- Incorporate voice-of-customer: NPS, CSAT, survey results, call notes, emails

Why it matters:

Data-backed QBRs are more credible and effective. TSIA’s research on outcome-based engagement models shows that value evidence (data plus narrative) is a core driver of renewal and expansion [2].

Where it goes wrong:

- Data is scattered across CRM, helpdesk, product analytics, call recordings, Slack, and email

- CSMs or AMs spend hours to days cobbling it together manually

- Important context (like that frustrated email from the VP last month) gets missed because it lives outside the “official” systems
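One way to keep the four Step 2 data categories in a single place is a small per-account record that also flags which categories are still empty. The sketch below is illustrative only: the class name, field names, and sample values are hypothetical and do not describe any particular CRM, helpdesk, or analytics tool.

```python
from dataclasses import dataclass, field


@dataclass
class AccountReviewData:
    """Hypothetical container for the four Step 2 data categories."""

    account: str
    usage: dict = field(default_factory=dict)              # e.g. logins, feature adoption
    outcomes: dict = field(default_factory=dict)           # e.g. KPIs, ROI estimates
    support: dict = field(default_factory=dict)            # e.g. tickets, escalations
    voice_of_customer: list = field(default_factory=list)  # e.g. NPS comments, emails

    def missing_categories(self) -> list:
        """Return the names of categories that are still empty."""
        names = ["usage", "outcomes", "support", "voice_of_customer"]
        return [name for name in names if not getattr(self, name)]


# Made-up sample values, for illustration only.
data = AccountReviewData(account="ACME Corp", usage={"logins": 120})
print(data.missing_categories())  # ['outcomes', 'support', 'voice_of_customer']
```

Running a check like this before deck building makes gaps visible early, instead of discovering mid-meeting that support or feedback data was never pulled.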

Step 3: Build the QBR Deck

What’s supposed to happen:

A concise, outcome-focused structure such as:

- Executive Summary

  - Key wins this period

  - Key risks and challenges

  - Recommended next steps

- Your Goals & Strategy

  - Recap of the customer’s stated objectives

  - Any changes in their business (M&A, leadership, budget shifts)

- Value & Outcomes

  - KPI trends

  - ROI or impact stories

  - Before/after comparisons where possible

- Adoption & Usage

  - Feature adoption

  - Usage by segment/team

  - Gaps and opportunities

- Support & Experience

  - Ticket trends

  - NPS/CSAT highlights

  - Themes from feedback

- Roadmap & Alignment

  - Relevant roadmap items

  - How they map to the customer’s goals

- Joint Plan / Next 90 Days

  - Clear action items, owners, and dates

  - Milestones for the next review

Why it matters:

This structure keeps the meeting focused on the customer’s business—not on an endless product tour. Gainsight and other CS thought leaders consistently recommend an “outcomes-first” format that leads with business results, not feature lists [3].

Where it goes wrong:

- The deck is 40–60 slides of feature screenshots and charts

- The story is missing: data with no narrative, or narrative with no data

- It’s built from scratch every time, burning hours of CSM and AM bandwidth

Step 4: Internal Review and Alignment

What’s supposed to happen:

- CS, Sales, and sometimes Product or Leadership review the QBR deck together

- Align on:

  - Renewal / expansion posture

  - Risk areas to probe

  - Who will say what in the meeting

Why it matters:

Cross-functional alignment ahead of the call means you present a unified front. Research on strategic account management underscores the importance of coordinated communication across all vendor stakeholders [7].

Where it goes wrong:

- Internal prep is rushed or skipped

- Different people show up with different agendas

- The customer experiences a fragmented, reactive conversation

Step 5: Run the Meeting

What’s supposed to happen:

- Start with outcomes and their priorities, not your agenda

- Spend more time on discussion than on presenting slides

- Ask questions like:

  - “What’s changed in your business since we last met?”

  - “What would make this partnership a no-brainer for you next year?”

  - “Where are we falling short of expectations?”

Why it matters:

Harvard Business Review articles and other research on executive communication suggest that senior leaders want vendors to:

- understand their business context, and

- co-create solutions, not just present information [6].

Where it goes wrong:

- It’s a monologue; the vendor talks for 80–90% of the time

- The “review” is mostly a product tour or roadmap dump

- Action items are vague or never captured

Step 6: Follow-Up and Execution

What’s supposed to happen:

- Share a succinct recap:

  - Decisions made

  - Action items, owners, and due dates

  - Updated success plan

- Track progress and refer back to it in the next review

Why it matters:

Without follow-up, QBRs become “nice conversations” that don’t change outcomes. TSIA and Forrester both highlight the importance of codifying customer outcomes and success plans as part of a recurring cadence [2][8].

Where it goes wrong:

- Notes live in someone’s notebook or a random doc

- No shared source of truth for the success plan

- The next QBR starts from scratch, again

How QBRs Are Evolving

Several trends are reshaping how leading teams approach QBRs:

1. From “Quarterly” to “Right Cadence”

Not every account needs a formal review every quarter. Many organizations now use:

- Tiered cadences:

  - Strategic: monthly / quarterly

  - Mid-market: 2–3x per year

  - Long-tail: automated or one-to-many reviews

- Event-based reviews:

  - Post-implementation

  - Pre-renewal

  - After major org or product changes

This aligns with best practices in scaled customer success, where engagement is driven by value moments and risk signals, not arbitrary calendar quarters [3][4].
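The tiered and event-based cadences above can be sketched as a simple lookup plus a "is a review due?" check. This is a minimal illustration, not a recommended policy: the tier and event names mirror the lists above, but the specific `reviews_per_year` values are assumed picks from the stated ranges, and every class and function name is hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Cadence:
    review_format: str
    reviews_per_year: Optional[int]  # None means no fixed calendar schedule


# Assumed values: 4 = the quarterly end of "monthly / quarterly",
# 2 = the low end of "2-3x per year".
CADENCE_BY_TIER = {
    "strategic": Cadence("live executive review", 4),
    "mid-market": Cadence("live review", 2),
    "long-tail": Cadence("automated or one-to-many review", None),
}

EVENT_TRIGGERS = {"post-implementation", "pre-renewal", "major org or product change"}


def review_due(tier: str, months_since_last_review: float, recent_events: set) -> bool:
    """Return True when a review is due, either by event or by calendar."""
    # Event-based reviews take priority over the calendar.
    if recent_events & EVENT_TRIGGERS:
        return True
    cadence = CADENCE_BY_TIER[tier]
    if cadence.reviews_per_year is None:
        return False  # no scheduled live review for this tier
    interval_months = 12 / cadence.reviews_per_year
    return months_since_last_review >= interval_months


print(review_due("mid-market", 4, set()))            # False
print(review_due("long-tail", 24, {"pre-renewal"}))  # True
```

Checking events before the calendar reflects the point that value moments and risk signals should drive engagement, with the calendar as a fallback.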

2. From “Slide Deck” to “Shared Workspace”

Instead of a static PowerPoint, teams are moving toward:

- Live dashboards (usage, outcomes, health)

- Shared success plans (in CRM or CS platforms)

- Collaborative docs with real-time notes and ownership

The review becomes a conversation anchored in live data, not a one-way presentation of stale screenshots.

3. From “CS-Only” to Cross-Functional

Sales, Product, and Leadership are increasingly:

- Joining key business reviews

- Using them to validate roadmap, gather voice-of-customer, and shape account strategy

- Treating QBR artifacts as input into forecasting, product planning, and exec reporting

This shifts QBRs from a “CS ritual” to a company-wide motion for strategic accounts.

4. From Manual to AI-Accelerated

The most important evolution: how the QBR is created.

Instead of:

- Manually pulling data from 6+ systems

- Rebuilding decks from scratch

- Hoping someone remembered that critical email or call

Organizations are now using AI and automation to:

- Aggregate all customer interactions and signals

- Summarize risks, opportunities, and sentiment

- Auto-generate QBR-ready narratives and visuals

This is where tools like Sturdy.ai fundamentally change the game.

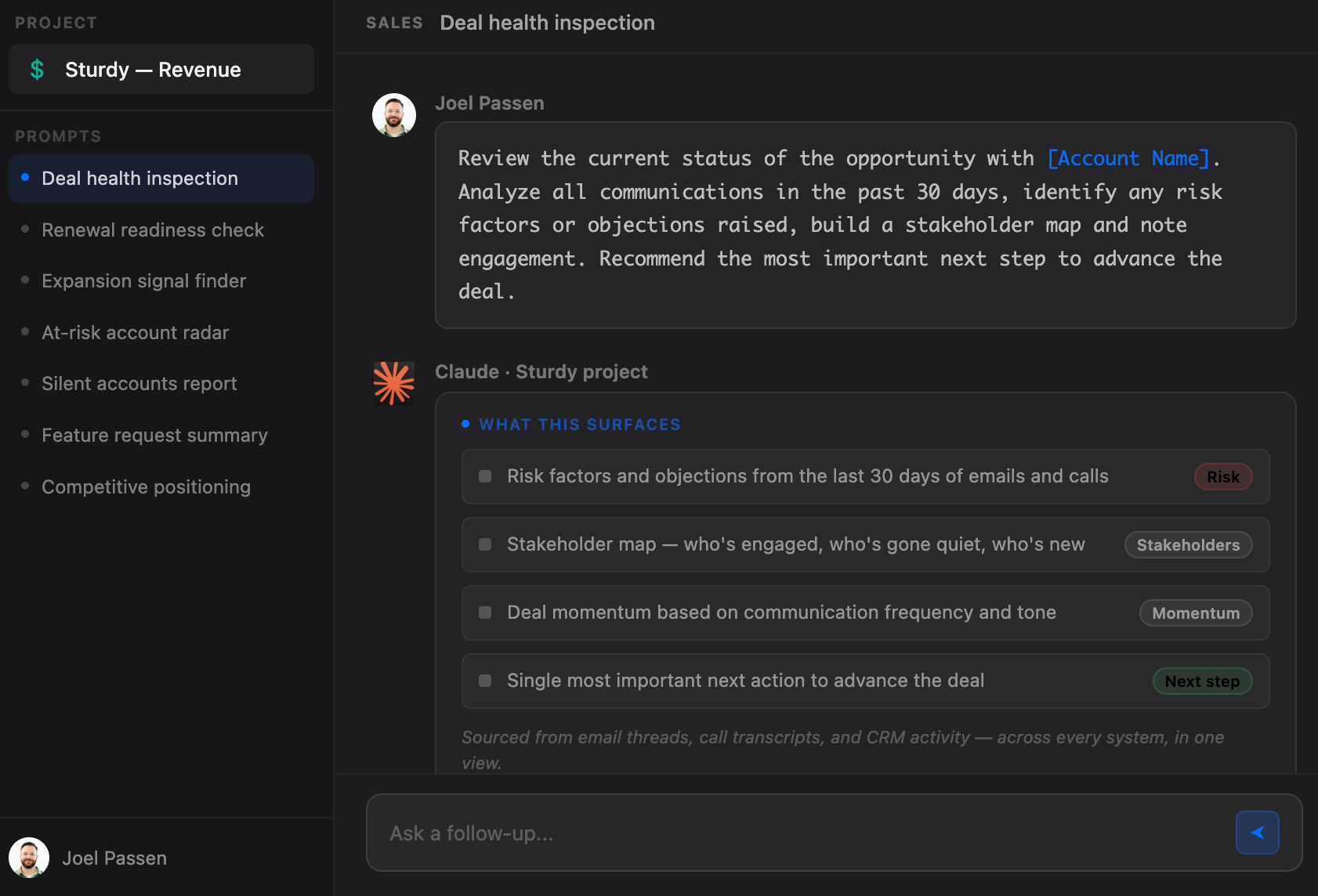

How Sturdy.ai Can Run QBRs for Any Account in Seconds

Traditional QBR prep can easily consume 5–10+ hours per account once you factor in:

- Data gathering

- Deck building

- Internal alignment

- Revisions

Multiply that across a CSM’s portfolio and it becomes obvious why QBRs either get skipped or watered down.
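To make that multiplication concrete, here is a back-of-the-envelope sketch. The 5 and 10 hours per account come from the range above; the portfolio size of 30 accounts and the 520 working hours per quarter (40 hours over 13 weeks) are purely illustrative assumptions, not benchmarks.

```python
def quarterly_prep_hours(accounts: int, hours_per_account: float) -> float:
    """Total prep hours if every account gets one review per quarter."""
    return accounts * hours_per_account


PORTFOLIO_SIZE = 30           # assumed number of accounts per CSM
WORK_HOURS_PER_QUARTER = 520  # assumed: 40 hours/week * 13 weeks

low = quarterly_prep_hours(PORTFOLIO_SIZE, 5)
high = quarterly_prep_hours(PORTFOLIO_SIZE, 10)

print(low, high)  # 150 300
print(round(low / WORK_HOURS_PER_QUARTER * 100))   # 29 (percent of the quarter)
print(round(high / WORK_HOURS_PER_QUARTER * 100))  # 58 (percent of the quarter)
```

Under these assumed numbers, prep alone would take roughly a third to more than half of a CSM's quarter, which is why reviews tend to get limited to a handful of top accounts.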

Sturdy.ai flips this on its head.

At a high level, Sturdy.ai:

- Ingests your real customer data

  - Emails

  - Call transcripts

  - Support tickets

  - CRM notes

  - Product usage and other signals (where integrated)

- Understands what matters

  - Themes and topics (requests, bugs, risk signals)

  - Sentiment and urgency

  - Stakeholder changes and escalation patterns

  - Outcome-related language (ROI, time savings, revenue impact, etc.)

- Auto-builds QBR-ready insights in seconds

  For any account, Sturdy.ai can surface:

  - What’s going well (wins, positive feedback, adoption signals)

  - What’s not (repeated complaints, unresolved issues, risk indicators)

  - Which outcomes you’ve actually helped drive

  - Concrete recommendations and action items for the next period

- Generates QBR artifacts instantly

  Instead of starting with a blank slide, you start with:

  - An executive summary tailored to that account

  - Key metrics and trends pulled from your systems

  - Highlighted quotes and examples from real interactions

  - A suggested agenda and next-steps section

What used to take hours or days of manual prep becomes a seconds-long operation:

“Run QBR for ACME Corp.”

…and you have a structured, account-specific review ready to refine and deliver.

Why This Matters for Modern CS, Sales, and Account Teams

When QBRs are no longer time-prohibitive:

- You can run them for more accounts, not just the top 10%

- You focus on quality of conversation, not on slide assembly

- You capture real, holistic context, not just what’s in one system

- You can standardize excellence, instead of relying on heroics from your best CSMs

Instead of asking, “Do we have time to do a QBR for this customer?”, the question becomes:

“Given we can generate a review in seconds, what’s the right cadence and format for this account?”

That’s the shift from QBRs-as-admin-work to QBRs-as-a-strategic-advantage.

Bringing It All Together

- QBRs were created to align on outcomes, prove value, and co-create a plan—not to be product demos with extra steps.

- Traditional QBRs are broken because they’re manual, generic, and often misaligned with what executives actually care about.

- The fundamentals still matter: clear objectives, data-backed story, joint success plan, and strong follow-up.

- QBRs are evolving toward flexible cadence, collaborative formats, cross-functional ownership, and heavy use of data and AI.

- With Sturdy.ai, you can run QBRs for any account in seconds, pulling from the full reality of your customer interactions—not just the few metrics someone had time to find.

If you’re spending hours or days preparing for each QBR, you’re paying the “old tax” on a motion that no longer has to be that painful. The value of the QBR is in the conversation, not the manual labor behind the slides.

References

[1] Aaron Thompson, “QBRs are Stupid,” LinkedIn Pulse (discussion of common QBR pitfalls and how they fail to deliver real value).

[2] TSIA (Technology & Services Industry Association), research and best practices on outcome-based customer engagement and Customer Success motions.

[3] Gainsight, Customer Success thought leadership on Executive Business Reviews and outcome-focused customer engagement.

[4] Winning by Design and similar SaaS consulting frameworks on recurring value reviews and customer-centric cadences.

[5] McKinsey & Company, research on B2B customer value, account management, and executive engagement strategies.

[6] Harvard Business Review and Gartner, articles and research on effective executive conversations and strategic vendor relationships.

[7] Strategic account management literature and SAM programs that emphasize coordinated, cross-functional engagement with key customers.

[8] Forrester, research on customer lifecycle management and the importance of measurable, recurring value communication.
